OpenHarness: The Missing Layer for AI Agents

... PLUS: Give Your AI Agents a Wallet

In today’s newsletter:

Give Your AI Agents a Wallet

OpenHarness: Turn Any LLM Into a Working Agent

Make AI Agents Engineer, Not Just Code

Reading time: 5 minutes.

Give Your AI Agents a Wallet

Every time your agent needs to call a paid API, you stop to handle it. Set up credentials, manage API keys, configure billing, and approve the transaction. Manual overhead, every single time.

mcpc, Apify's open-source CLI client for MCP, supports autonomous, agentic payments. Agents can pay for services mid-task, no human approval needed.

How mcpc Solves It

mcpc gives agents a crypto wallet funded with USDC. When your agent needs a paid service, it pays directly and continues.

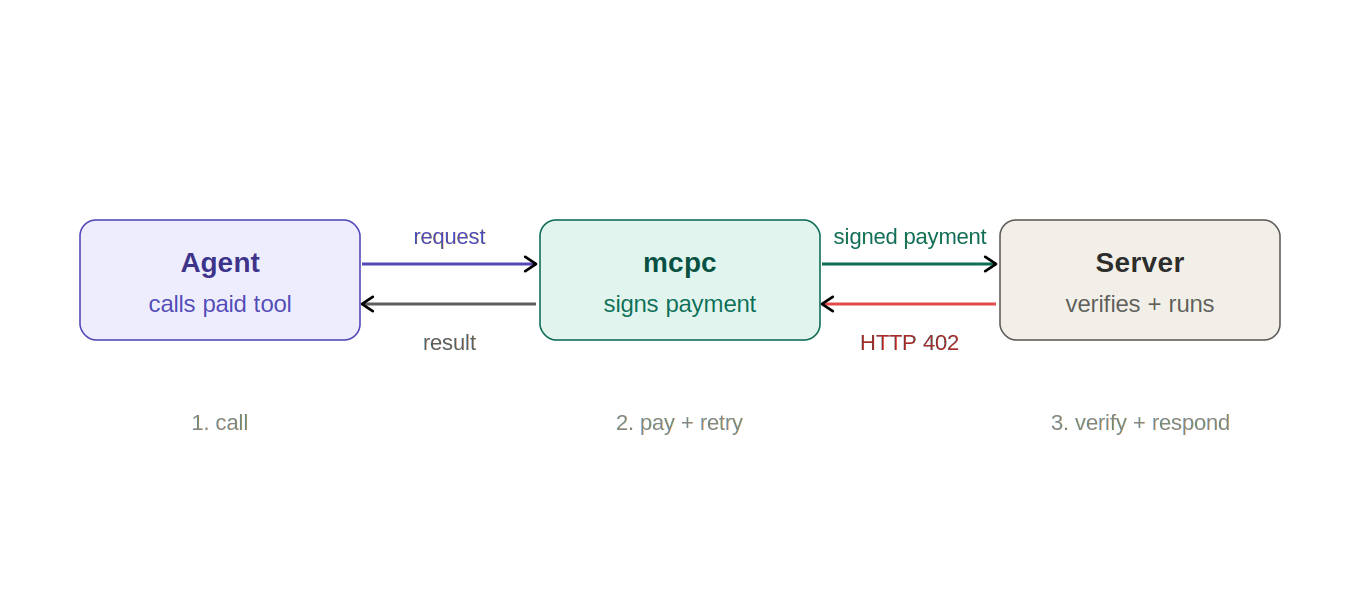

The payment happens within the HTTP request itself, using the x402 payment protocol: the server returns a 402 status with payment details, mcpc signs and retries, the server verifies and responds.

How It Works

When an agent calls a paid API, the server responds with what it costs and where to pay. mcpc signs the payment from the agent's wallet and retries the request automatically. The server verifies the payment and returns the result. The agent never pauses. The whole exchange takes under 3 seconds.

Full workflow:

1. Agent calls a paid tool via mcpc
2. Server returns 402 with payment details
3. mcpc signs the payment automatically using its wallet
4. Server verifies and runs the tool
5. Agent gets results and continues
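The pay-and-retry exchange can be sketched as a small, self-contained simulation. This is not mcpc's code or the x402 wire format: the `X-PAYMENT` header name, the `accepts` field, the price, the spending limit, and the HMAC "signature" are all assumptions made for illustration, and the toy server shares the wallet key only so the example can verify the proof without a blockchain.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass
from decimal import Decimal

# Hypothetical values for illustration only.
PRICE_USDC = "0.01"
PAY_TO = "0xSERVICE"
WALLET_KEY = b"demo-wallet-key"  # stand-in for a real wallet's private key


@dataclass
class Response:
    status: int
    body: dict


def sign_payment(requirements: dict, key: bytes) -> str:
    """Stand-in for a wallet signature: an HMAC over the payment details."""
    payload = json.dumps(requirements, sort_keys=True).encode()
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def paid_server(request: dict) -> Response:
    """Toy server: asks for payment, then verifies it and runs the tool."""
    requirements = {"amount": PRICE_USDC, "asset": "USDC", "pay_to": PAY_TO}
    proof = request["headers"].get("X-PAYMENT")
    if proof is None:
        # Step 2: no payment attached, so answer 402 with the price.
        return Response(402, {"accepts": requirements})
    expected = sign_payment(requirements, WALLET_KEY)
    if not hmac.compare_digest(proof, expected):
        return Response(402, {"accepts": requirements, "error": "invalid payment"})
    # Step 4: payment verified, run the tool.
    return Response(200, {"result": f"scraped {request['url']}"})


def call_with_payment(url: str, key: bytes, max_price: str = "0.05") -> Response:
    """Client side: call, pay on 402 if the price is acceptable, retry once."""
    request = {"url": url, "headers": {}}
    response = paid_server(request)  # Step 1: first attempt, unpaid
    if response.status != 402:
        return response
    requirements = response.body["accepts"]
    if Decimal(requirements["amount"]) > Decimal(max_price):
        raise RuntimeError("price exceeds the agent's spending limit")
    # Step 3: sign the payment and retry the same request.
    request["headers"]["X-PAYMENT"] = sign_payment(requirements, key)
    return paid_server(request)  # Step 5: the caller gets the result


if __name__ == "__main__":
    print(call_with_payment("https://example.com/page", WALLET_KEY))
```

The `max_price` check is a design choice worth keeping in any real setup: an agent that pays without asking should still have a ceiling on what it will pay per call.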

Setup

Create a wallet with mcpc, fund it with USDC on Base, and connect to x402-compatible services. The agent handles every payment from there.

mcpc works with Claude Code, Codex, and any MCP-compatible agent. Apify Actors (web scrapers, automation tools) are available via x402 today.

This is what autonomous actually means. Not an agent that pauses at every paywall and waits for a human. One that pays and keeps working.

OpenHarness: Turn Any LLM Into a Working Agent

An LLM is not an agent by itself. It can reason. It can write code. It can plan. But it cannot act. It has no way to read files, run commands, call APIs, remember what it did last session, or coordinate with other models.

To actually perform actions, a model needs infrastructure around it called an agent harness. The model provides intelligence. The harness provides everything else.

Without one, you are building all of that yourself from scratch every time.

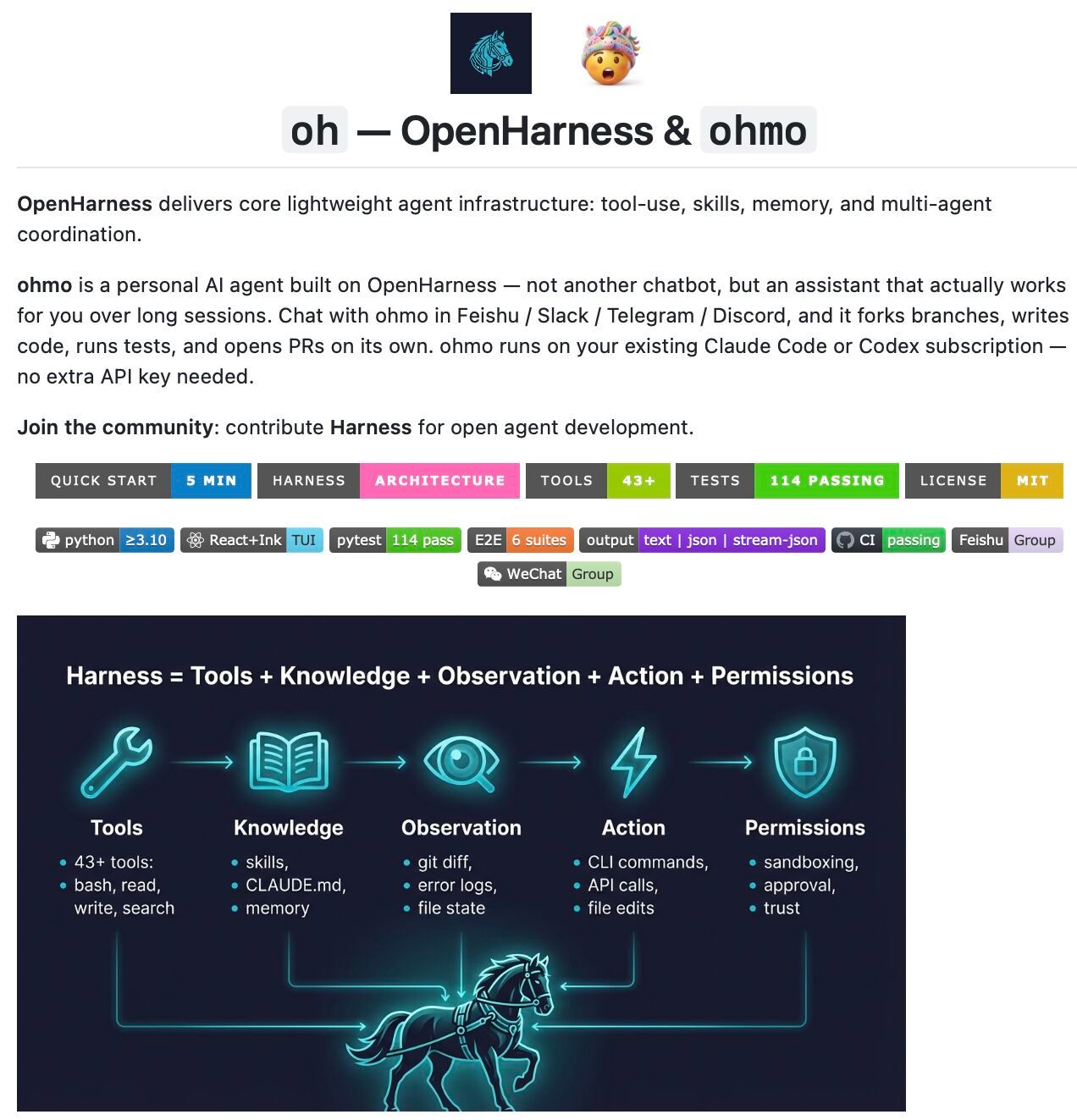

OpenHarness is an open-source agent harness built to give LLMs everything they need to operate as real agents.

You can use it to experiment with tools, skills, and agent coordination patterns, extend it with custom plugins and providers, or build specialized agents on top of its architecture.

What OpenHarness Actually Gives You

Agent Loop: Runs a continuous cycle: the model receives a prompt, decides on an action, the harness executes it, and the result feeds back to the model.

Harness Toolkit: 43 tools across File, Shell, Search, Web, and MCP. On-Demand Loading pulls in domain knowledge from markdown files when needed.

Context and Memory: Uses MEMORY.md for persistent cross-session knowledge, and discovers and injects CLAUDE.md files for project context.

Governance: Controls access to tools and actions, requires approval for sensitive operations, and monitors every step before and after execution.

Swarm Coordination: One agent can spawn a team, delegate background tasks to individual members, and track their progress across multiple agents.
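To make the agent loop and the governance check concrete, here is a minimal sketch under stated assumptions. It does not use OpenHarness's actual API: the tool registry, the `NEEDS_APPROVAL` set, the `approve` callback, and the scripted stand-in for the model are all hypothetical names chosen for illustration.

```python
from typing import Callable

# Hypothetical tool registry; a real harness ships its own tools.
TOOLS: dict[str, Callable[[str], str]] = {
    "read_file": lambda arg: f"<contents of {arg}>",
    "shell": lambda arg: f"<output of `{arg}`>",
}
NEEDS_APPROVAL = {"shell"}  # sensitive tools gated by governance


def scripted_model(history: list[dict]) -> dict:
    """Stub standing in for an LLM: picks the next action from the history."""
    tool_results = [m for m in history if m["role"] == "tool"]
    if not tool_results:
        return {"action": "read_file", "input": "README.md"}
    if len(tool_results) == 1:
        return {"action": "shell", "input": "pytest"}
    return {"action": "finish", "input": "done after 2 tool calls"}


def run_agent(
    prompt: str,
    model: Callable[[list[dict]], dict],
    approve: Callable[[str, str], bool],
    max_steps: int = 10,
) -> tuple[str, list[dict]]:
    """Loop: the model decides, the harness executes, the result feeds back."""
    history = [{"role": "user", "content": prompt}]
    for _ in range(max_steps):
        decision = model(history)
        if decision["action"] == "finish":
            return decision["input"], history
        name, arg = decision["action"], decision["input"]
        # Governance: sensitive tools run only if the approver says yes.
        if name in NEEDS_APPROVAL and not approve(name, arg):
            result = f"denied: {name} requires approval"
        else:
            result = TOOLS[name](arg)
        # Feed the tool result back so the model sees it on the next turn.
        history.append({"role": "tool", "name": name, "content": result})
    raise RuntimeError("agent did not finish within max_steps")


if __name__ == "__main__":
    answer, log = run_agent("fix the tests", scripted_model, lambda n, a: True)
    print(answer)
    for message in log:
        print(message)
```

Swapping `scripted_model` for a real model call leaves the loop unchanged, which is the point of a harness: the model supplies decisions, and everything around it stays fixed.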

ohmo: a personal agent built on OpenHarness

The repo ships with ohmo, a ready-to-use personal agent. It runs inside Feishu, Slack, Telegram, or Discord.

Give it a task, and it forks a branch, writes the code, runs tests, and opens a pull request.

It works on your existing Claude Code or Codex subscription. No separate API key needed.

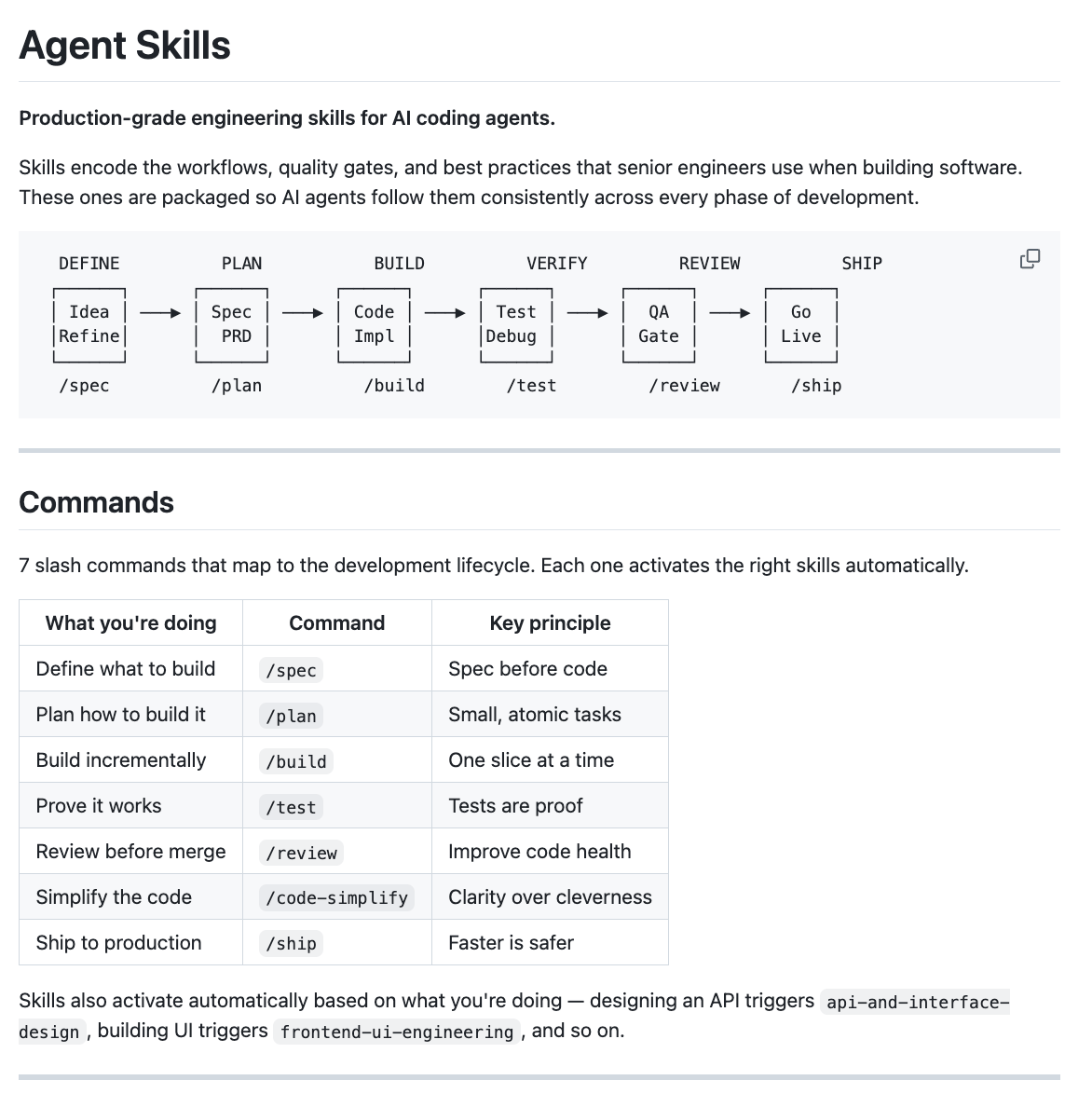

Make AI Agents Engineer, Not Just Code

AI coding agents often optimize for the shortest path to a working result. They skip the spec, skip the tests, skip the review, and get to the code as quickly as possible.

The output is often plausible, sometimes correct, and rarely production-ready. Addy Osmani, of the Google Chrome team, built Agent Skills to fix this.

What Are Agent Skills?

An agent skill is a structured instruction that tells an AI agent not just what to do, but how to do it consistently. Not a prompt. A workflow with steps, checkpoints, and exit criteria.

The difference matters. A prompt saying "always write tests" is easy for an agent to rationalize around.

A skill that defines a Red-Green-Refactor cycle, specifies where tests live, and requires passing coverage before moving on is not.

Skills encode process the same way a senior engineer carries institutional knowledge: not as advice, but as habit.

As agents take on more complex, multi-step work, the quality of the skills around them increasingly determines the quality of the output.
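The difference between a prompt and a skill can be sketched in code. In the actual project, skills are Markdown files; the model below is a hypothetical representation, with assumed names (`Step`, `Skill`, `next_step`) and an assumed 80% coverage threshold, meant only to show how exit criteria turn advice into a gate.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Step:
    name: str
    exit_check: Callable[[dict], bool]  # must hold before the agent moves on


@dataclass
class Skill:
    name: str
    steps: list[Step] = field(default_factory=list)

    def next_step(self, state: dict) -> Optional[str]:
        """Return the first step whose exit criterion is not yet met."""
        for step in self.steps:
            if not step.exit_check(state):
                return step.name
        return None  # every checkpoint passed


# A Red-Green-Refactor cycle expressed as checkpoints rather than advice.
tdd = Skill(
    name="test-driven-development",
    steps=[
        Step("red", lambda s: s.get("failing_test_written", False)),
        Step("green", lambda s: s.get("tests_passing", False)),
        Step(
            "refactor",
            lambda s: s.get("tests_passing", False) and s.get("coverage", 0.0) >= 0.8,
        ),
    ],
)

if __name__ == "__main__":
    print(tdd.next_step({}))
    print(tdd.next_step({"failing_test_written": True, "tests_passing": True,
                         "coverage": 0.5}))
```

An agent cannot talk its way past `next_step`: until the recorded state satisfies the check, the workflow keeps pointing at the same step.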

What Addy Built

It's a collection of 20 skills covering the full development lifecycle, each one a Markdown file encoding a specific engineering workflow. Seven slash commands activate the right ones automatically:

- /spec before writing any code
- /plan to break the spec into atomic tasks
- /build to implement one slice at a time
- /test to prove the implementation works
- /review before merging
- /ship to deploy with confidence

Why It Works Across Tools

Skills are plain Markdown. They work with any agent that accepts system prompts or instruction files. Claude Code, Cursor, Gemini CLI, Windsurf, GitHub Copilot, and Kiro all have setup guides.

Skills use progressive disclosure so only the relevant parts load at each step, keeping token usage low.
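Progressive disclosure can be illustrated with a short sketch. The skill file layout here is an assumption, not the project's real format: a summary at the top, then one `## ` section per workflow step. Only the summary and the section for the current step are placed in the agent's context.

```python
# Hypothetical skill file; the real Agent Skills layout may differ.
SKILL_MD = """\
# test-driven-development
Summary: write a failing test, make it pass, then refactor.

## red
Write one failing test that describes the behavior.

## green
Write the minimum code that makes the test pass.

## refactor
Clean up while keeping the tests green.
"""


def split_sections(markdown: str) -> dict[str, str]:
    """Split a skill file into a summary plus one entry per '## ' heading."""
    sections: dict[str, list[str]] = {"summary": []}
    current = "summary"
    for line in markdown.splitlines():
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        else:
            sections[current].append(line)
    return {name: "\n".join(lines).strip() for name, lines in sections.items()}


def context_for(markdown: str, step: str) -> str:
    """Return only the summary and the section needed for the current step."""
    sections = split_sections(markdown)
    return sections["summary"] + "\n\n" + sections[step]


if __name__ == "__main__":
    print(context_for(SKILL_MD, "green"))
```

With three short sections the saving is small, but the same split applied across a whole collection of skills keeps the prompt limited to the step the agent is actually on.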

The shift from prompts to structured workflows is the same shift that happened in software engineering decades ago.

Writing down what you wanted wasn't enough. You needed process, review gates, and verification to produce reliable software consistently.

Agent Skills applies the same logic to agents.

That’s all for today. Thank you for reading today’s edition. See you in the next issue with more AI Engineering insights.
